This lesson provides an overview of the database of Genotypes and Phenotypes (dbGaP), which was developed to archive and distribute the data and results from studies that have investigated the interaction of genotype and phenotype in humans.

Difficulty level: Beginner

Duration: 48:22

Speaker: Michael Feolo

This lecture covers modeling the neuron in silicon, modeling vision and audition, and sensory fusion using a deep network.

Difficulty level: Beginner

Duration: 1:32:17

Speaker: Shih-Chii Liu

This lesson gives an overview of past and present neurocomputing approaches and hybrid analog/digital circuits that directly emulate the properties of neurons and synapses.

Difficulty level: Beginner

Duration: 41:57

Speaker: Giacomo Indiveri

This lesson presents the Brian neural simulator, in which models are defined directly by their mathematical equations and code is generated automatically for each specific target.

Difficulty level: Beginner

Duration: 20:39

Speaker: Giacomo Indiveri

This lecture covers how using the NIDM data format within BIDS makes your datasets more searchable, and how to optimize your dataset searches.

Difficulty level: Beginner

Duration: 12:33

Speaker: David Keator

This lecture covers positron emission tomography (PET) imaging and the Brain Imaging Data Structure (BIDS), and how they work together within the PET-BIDS standard to make neuroscience more open and FAIR.

Difficulty level: Beginner

Duration: 12:06

Speaker: Melanie Ganz

This lecture discusses the FAIR principles as they apply to electrophysiology data and metadata, the building blocks for community tools and standards, platforms and grassroots initiatives, and the challenges therein.

Difficulty level: Beginner

Duration: 8:11

Speaker: Thomas Wachtler

This lecture discusses how to standardize electrophysiology data organization to move towards being more FAIR.

Difficulty level: Beginner

Duration: 15:51

Speaker: Sylvain Takerkart

This lecture covers advanced concepts of energy-based models. The lecture is part of the Advanced Energy-Based Models module of the Deep Learning Course at NYU's Center for Data Science. Prerequisites for this course include Energy-Based Models I, Energy-Based Models II, Energy-Based Models III, and an Introduction to Data Science or a graduate-level machine learning course.

Difficulty level: Beginner

Duration: 56:41

Speaker: Alfredo Canziani

This lesson introduces deep learning from the perspective of inductive biases, with an emphasis on matching deep learning to the right research questions.

Difficulty level: Beginner

Duration: 1:35:12

Speaker: Blake Richards

As part of NeuroHackademy 2021, Noah Benson gives an introduction to PyTorch, one of the two most common software packages for deep learning applications in the neurosciences.

Difficulty level: Beginner

Duration: 50:40

Speaker: Noah Benson

Learn how to use TensorFlow 2.0 in this full tutorial for beginners. This course is designed for Python programmers looking to enhance their knowledge and skills in machine learning and artificial intelligence.

Throughout the 8 modules in this course you will learn about fundamental concepts and methods in ML & AI like core learning algorithms, deep learning with neural networks, computer vision with convolutional neural networks, natural language processing with recurrent neural networks, and reinforcement learning.

Difficulty level: Beginner

Duration: 6:52:07


In this hands-on tutorial, Dr. Robert Guangyu Yang works through a number of coding exercises to show how RNNs can be used to study cognitive neuroscience questions, with a quick demonstration of how to train and analyze RNNs on various cognitive neuroscience tasks. Familiarity with Python and basic knowledge of PyTorch are assumed.

Difficulty level: Beginner

Duration: 26:38

Speaker: Robert Guangyu Yang

This lecture covers the description and characterization of an input-output relationship in an information-theoretic context.

Difficulty level: Beginner

Duration: 1:35:33

Speaker: Jonathan D. Victor

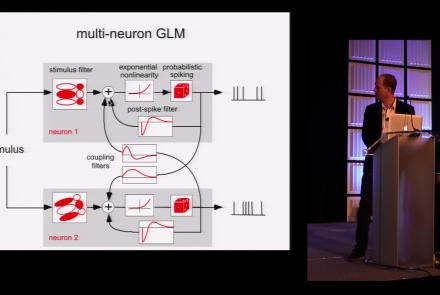

This lesson is part 1 of 2 of a tutorial on statistical models for neural data.

Difficulty level: Beginner

Duration: 1:45:48

Speaker: Jonathan Pillow

This lesson is part 2 of 2 of a tutorial on statistical models for neural data.

Difficulty level: Beginner

Duration: 1:50:31

Speaker: Jonathan Pillow

From the retina to the superior colliculus, the lateral geniculate nucleus into primary visual cortex and beyond, this lecture gives a tour of the mammalian visual system, highlighting the Nobel Prize-winning discoveries of Hubel & Wiesel.

Difficulty level: Beginner

Duration: 56:31

Speaker: Clay Reid

From Universal Turing Machines to McCulloch-Pitts and Hopfield associative memory networks, this lecture explains what is meant by computation.

Difficulty level: Beginner

Duration: 55:27

Speaker: Christof Koch

In this lesson you will learn about ion channels and the movement of ions across the cell membrane, one of the key mechanisms underlying neuronal communication.

Difficulty level: Beginner

Duration: 25:51

Speaker: Carl Petersen

How does the brain learn? This lecture discusses the roles of development and adult plasticity in shaping functional connectivity.

Difficulty level: Beginner

Duration: 1:08:45

Speaker: Clay Reid
