This lesson introduces the EEGLAB toolbox and the motivations for using it.

Difficulty level: Beginner

Duration: 15:32

Speaker: Arnaud Delorme

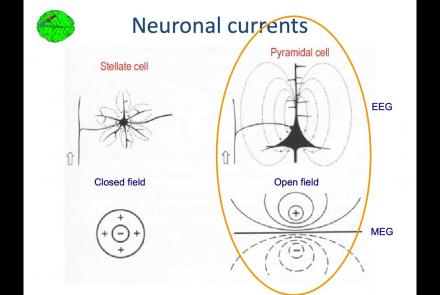

In this lesson, you will learn about the biological activity that generates the EEG signal and that EEG recordings measure.

Difficulty level: Beginner

Duration: 6:53

Speaker: Arnaud Delorme

This lesson goes over the characteristics of EEG signals when analyzed in source space (as opposed to sensor space).

Difficulty level: Beginner

Duration: 10:56

Speaker: Arnaud Delorme

This lesson describes the development of EEGLAB and the extent to which the research community uses it.

Difficulty level: Beginner

Duration: 6:06

Speaker: Arnaud Delorme

This lesson shows how to build a processing pipeline in EEGLAB for a single participant.

Difficulty level: Beginner

Duration: 9:20

Speaker:

Whereas the previous lesson of this course outlined how to build a processing pipeline for a single participant, this lesson discusses analysis pipelines for multiple participants simultaneously.

Difficulty level: Beginner

Duration: 10:55

Speaker: Arnaud Delorme

In addition to outlining the motivations behind preprocessing EEG data in general, this lesson covers the first step in preprocessing data with EEGLAB: importing raw data.

Difficulty level: Beginner

Duration: 8:30

Speaker: Arnaud Delorme

Continuing along the EEGLAB preprocessing pipeline, this tutorial walks users through how to import data events as well as EEG channel locations.

Difficulty level: Beginner

Duration: 11:53

Speaker: Arnaud Delorme

This tutorial demonstrates how to re-reference and resample raw data in EEGLAB, why such steps are important or useful in the preprocessing pipeline, and how choices made at this step may affect subsequent analyses.

Difficulty level: Beginner

Duration: 11:48

Speaker: Arnaud Delorme

In this tutorial, users learn about the various filtering options in EEGLAB, how to inspect channel properties for noisy signals, and how to filter out specific components of EEG data (e.g., electrical line noise).

Difficulty level: Beginner

Duration: 10:46

Speaker: Arnaud Delorme

This tutorial shows users how to visually inspect partially preprocessed neuroimaging data in EEGLAB. Specifically, it covers how to use the data browser to check particular channels, epochs, or events for removable artifacts, whether biological (e.g., eye blinks, muscle movements, heartbeat) or otherwise (e.g., corrupt channels, line noise).

Difficulty level: Beginner

Duration: 5:08

Speaker: Arnaud Delorme

This tutorial provides instruction on how to use EEGLAB to further preprocess EEG datasets by identifying and discarding bad channels which, if left unaddressed, can corrupt and confound subsequent analysis steps.

Difficulty level: Beginner

Duration: 13:01

Speaker: Arnaud Delorme

Users following this tutorial will learn how to identify and discard bad EEG data segments using the MATLAB toolbox EEGLAB.

Difficulty level: Beginner

Duration: 11:25

Speaker: Arnaud Delorme

This lecture gives an overview of how to prepare and preprocess neuroimaging (EEG/MEG) data for use in TVB.

Difficulty level: Intermediate

Duration: 1:40:52

Speaker: Paul Triebkorn

This module covers many of the types of non-invasive neurotech and neuroimaging devices including electroencephalography (EEG), electromyography (EMG), electroneurography (ENG), magnetoencephalography (MEG), and more.

Difficulty level: Beginner

Duration: 13:36

Speaker: Harrison Canning

Hierarchical Event Descriptors (HED) fill a major gap in the neuroinformatics standards toolkit, namely the specification of the nature(s) of events and time-limited conditions recorded as having occurred during time series recordings (EEG, MEG, iEEG, fMRI, etc.). Here, the HED Working Group presents an online INCF workshop on the need for, structure of, tools for, and use of HED annotation to prepare neuroimaging time series data for storing, sharing, and advanced analysis.

Difficulty level: Beginner

Duration: 3:37:42

Speaker:

This lecture explains the concept of federated analysis in the context of medical data and the challenges associated with it. The lecture also presents an example of hospital federations via the Medical Informatics Platform.

Difficulty level: Intermediate

Duration: 19:15

Speaker: Yannis Ioannidis

This lesson continues with the second workshop on reproducible science, focusing on additional open source tools for researchers and data scientists, such as the R programming language for data science and associated tools like RStudio and R Markdown. Users are also introduced to Python, IPython notebooks, and Google Colab, and are given hands-on tutorials on how to create a Binder environment as well as various containers in Docker and Singularity.

Difficulty level: Beginner

Duration: 1:16:04

Speaker: Erin Dickie and Sejal Patel

This lesson contains both a lecture and a tutorial component. The lecture (0:00-20:03 of the YouTube video) discusses the need for intersectional approaches in healthcare and the impact of neglecting intersectionality in patient populations. The lecture is followed by a practical tutorial in both Python and R on how to assess intersectional bias in datasets. Links to relevant code and data are found below.

Difficulty level: Beginner

Duration: 52:26

This is a hands-on tutorial on PLINK, the open source whole genome association analysis toolset. The aims of this tutorial are to teach users how to perform basic quality control on genetic datasets, as well as to identify and understand GWAS summary statistics.

Difficulty level: Intermediate

Duration: 1:27:18

Speaker: Dan Felsky

Topics

- Artificial Intelligence (7)

- Philosophy of Science (5)

- Provenance (3)

- protein-protein interactions (1)

- Extracellular signaling (1)

- Animal models (8)

- Assembly 2021 (29)

- Brain-hardware interfaces (14)

- Clinical neuroscience (40)

- International Brain Initiative (2)

- Repositories and science gateways (11)

- Resources (6)

- General neuroscience (62)

- Neuroscience (11)

- Cognitive Science (7)

- Cell signaling (6)

- Brain networks (11)

- Glia (1)

- Electrophysiology (41)

- Learning and memory (5)

- Neuroanatomy (24)

- Neurobiology (16)

- Neurodegeneration (1)

- Neuroimmunology (1)

- Neural networks (15)

- Neurophysiology (27)

- Neuropharmacology (2)

- Neuronal plasticity (16)

- Synaptic plasticity (4)

- Visual system (12)

- Phenome (1)

- General neuroinformatics (27)

- Computational neuroscience (279)

- Statistics (7)

- Computer Science (21)

- Genomics (34)

- Data science (34)

- Open science (61)

- Project management (8)

- Education (4)

- Publishing (4)

- Neuroethics (42)