
This talk describes the NIH-funded SPARC Data Structure and how the project approaches ontology development while adhering to the FAIR principles.

Difficulty level: Beginner

Duration: 25:44

Speaker: Fahim Imam

Course:

This lecture covers structured data, databases, federating neuroscience-relevant databases, and ontologies.

Difficulty level: Beginner

Duration: 1:30:45

Speaker: Maryann Martone

Course:

This lecture covers FAIR atlases, including their background and construction, as well as how they can be created in line with the FAIR principles.

Difficulty level: Beginner

Duration: 14:24

Speaker: Heidi Kleven

This lecture gives an overview of the Distributed Archives for Neurophysiology Data Integration (DANDI) archive, its ties to the FAIR principles and open source, its integrations with other programs, and upcoming features.

Difficulty level: Beginner

Duration: 13:34

Speaker: Yaroslav O. Halchenko

This lecture contains an overview of electrophysiology data reuse within the EBRAINS ecosystem.

Difficulty level: Beginner

Duration: 15:57

Speaker: Andrew Davison

Course:

This lesson gives an introduction to the Mathematics chapter of Datalabcc's Foundations in Data Science series.

Difficulty level: Beginner

Duration: 2:53

Speaker: Barton Poulson

Course:

This lesson serves as a primer on elementary algebra.

Difficulty level: Beginner

Duration: 3:03

Speaker: Barton Poulson

Course:

This lesson provides a primer on linear algebra, demonstrating how its operations are fundamental to many data science methods.

Difficulty level: Beginner

Duration: 5:38

Speaker: Barton Poulson

Course:

In this lesson, users will learn about systems of linear equations and follow along with some practical use cases.

Difficulty level: Beginner

Duration: 5:24

Speaker: Barton Poulson

Course:

This talk gives a primer on calculus, emphasizing its role in data science.

Difficulty level: Beginner

Duration: 4:17

Speaker: Barton Poulson

Course:

This lesson clarifies how calculus relates to optimization in a data science context.

Difficulty level: Beginner

Duration: 8:43

Speaker: Barton Poulson

Course:

This lesson covers Big O notation, a mathematical notation that describes the limiting behavior of a function as its argument tends toward a particular value or infinity. It is useful for data scientists who want to evaluate the efficiency of their algorithms.

Difficulty level: Beginner

Duration: 5:19

Speaker: Barton Poulson

Course:

This lesson serves as a primer on the fundamental concepts underlying probability.

Difficulty level: Beginner

Duration: 7:33

Speaker: Barton Poulson

Serving as a good refresher, this lesson explains the math and logic concepts that are important for programmers to understand, including sets, propositional logic, conditional statements, and more.

This compilation is courtesy of freeCodeCamp.

Difficulty level: Beginner

Duration: 1:00:07

Speaker: Shawn Grooms

This lesson provides a useful refresher that will facilitate the use of MATLAB, Octave, and various matrix-manipulation and machine-learning software packages.

This lesson was created by RootMath.

Difficulty level: Beginner

Duration: 1:21:30

Speaker:

Course:

This lecture covers the description and characterization of an input-output relationship in an information-theoretic context.

Difficulty level: Beginner

Duration: 1:35:33

Speaker: Jonathan D. Victor

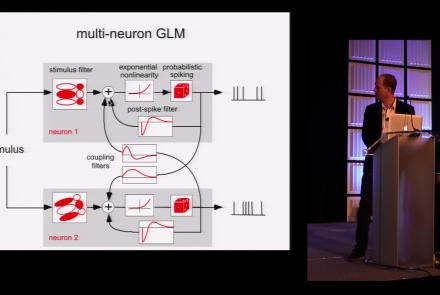

This lesson is part 1 of 2 of a tutorial on statistical models for neural data.

Difficulty level: Beginner

Duration: 1:45:48

Speaker: Jonathan Pillow

This lesson is part 2 of 2 of a tutorial on statistical models for neural data.

Difficulty level: Beginner

Duration: 1:50:31

Speaker: Jonathan Pillow

Course:

From the retina to the superior colliculus, and from the lateral geniculate nucleus into primary visual cortex and beyond, this lecture gives a tour of the mammalian visual system, highlighting the Nobel Prize-winning discoveries of Hubel and Wiesel.

Difficulty level: Beginner

Duration: 56:31

Speaker: Clay Reid

Course:

From Universal Turing Machines to McCulloch-Pitts and Hopfield associative memory networks, this lecture explains what is meant by computation.

Difficulty level: Beginner

Duration: 55:27

Speaker: Christof Koch

Topics

- Artificial Intelligence (6)

- Philosophy of Science (5)

- Provenance (2)

- protein-protein interactions (1)

- Extracellular signaling (1)

- Animal models (6)

- Assembly 2021 (29)

- Brain-hardware interfaces (13)

- Clinical neuroscience (17)

- International Brain Initiative (2)

- Repositories and science gateways (11)

- Resources (6)

- General neuroscience (45)

- Neuroscience (9)

- Cognitive Science (7)

- Cell signaling (3)

- Brain networks (4)

- Glia (1)

- Electrophysiology (16)

- Learning and memory (3)

- Neuroanatomy (17)

- Neurobiology (7)

- Neurodegeneration (1)

- Neuroimmunology (1)

- Neural networks (4)

- Neurophysiology (22)

- Neuropharmacology (2)

- Synaptic plasticity (2)

- Visual system (12)

- Phenome (1)

- General neuroinformatics (15)

- Computational neuroscience (192)

- Statistics (2)

- Computer Science (15)

- Genomics (26)

- Data science (24)

- Open science (55)

- Project management (7)

- Education (3)

- Publishing (4)

- Neuroethics (35)