This lesson gives an in-depth description of scientific workflows, from study inception and planning to dissemination of results.

Difficulty level: Beginner

Duration: 44:41

Speaker: Yaroslav O. Halchenko

This lesson gives an introductory presentation on how data science can help with scientific reproducibility.

Difficulty level: Beginner

Speaker: Michel Dumontier

This lecture discusses how FAIR practices affect personalized data models, including workflows, challenges, and how to improve these practices.

Difficulty level: Beginner

Duration: 13:16

Speaker: Kelly Shen

This lecture covers how to make modeling workflows FAIR by working through a practical example, dissecting the steps within the workflow, and detailing the tools and resources used at each step.

Difficulty level: Beginner

Duration: 15:14

Speaker: Salvador Dura-Bernal

This lesson introduces concepts and practices surrounding reference atlases for the mouse and rat brains. It also discusses examples of data systems used to organize neuroscience data collections in the context of reference atlases, as well as analytical workflows applied to the data.

Difficulty level: Beginner

Duration: 03:04:29

This lesson gives an introduction to the Mathematics chapter of Datalabcc's Foundations in Data Science series.

Difficulty level: Beginner

Duration: 2:53

Speaker: Barton Poulson

This lesson serves as a primer on elementary algebra.

Difficulty level: Beginner

Duration: 3:03

Speaker: Barton Poulson

This lesson provides a primer on linear algebra, aiming to demonstrate how its operations are fundamental to much of data science.

Difficulty level: Beginner

Duration: 5:38

Speaker: Barton Poulson

In this lesson, users will learn about systems of linear equations and follow along with some practical use cases.

Difficulty level: Beginner

Duration: 5:24

Speaker: Barton Poulson

This talk gives a primer on calculus, emphasizing its role in data science.

Difficulty level: Beginner

Duration: 4:17

Speaker: Barton Poulson

This lesson clarifies how calculus relates to optimization in a data science context.

Difficulty level: Beginner

Duration: 8:43

Speaker: Barton Poulson

This lesson covers Big O notation, a mathematical notation that describes the limiting behavior of a function as its argument tends towards a particular value or infinity, which data scientists can use to evaluate the efficiency of their algorithms.
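
As a rough illustration of the idea (a sketch that is not taken from the lesson itself), the Python snippet below counts how many elements two search strategies examine in the worst case. The function names `linear_search_steps` and `binary_search_steps` are hypothetical, chosen only for this example. Linear search grows in proportion to the input size, O(n), while binary search on sorted data grows with its logarithm, O(log n).

```python
def linear_search_steps(items, target):
    """Count the elements examined by a left-to-right scan, an O(n) search."""
    steps = 0
    for value in items:
        steps += 1
        if value == target:
            break
    return steps


def binary_search_steps(items, target):
    """Count the midpoints examined by binary search on a sorted list, O(log n)."""
    steps = 0
    low, high = 0, len(items) - 1
    while low <= high:
        steps += 1
        mid = (low + high) // 2
        if items[mid] == target:
            break
        if items[mid] < target:
            low = mid + 1   # target can only be in the upper half
        else:
            high = mid - 1  # target can only be in the lower half
    return steps


# Hypothetical demo: search for the last element, the worst case for a linear scan.
for n in (1_000, 1_000_000):
    data = list(range(n))
    target = n - 1
    print(n, linear_search_steps(data, target), binary_search_steps(data, target))
```

Growing the input a thousandfold multiplies the linear step count by a thousand, while the binary search count only roughly doubles, which is the kind of difference Big O notation is meant to capture.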

Difficulty level: Beginner

Duration: 5:19

Speaker: Barton Poulson

This lesson serves as a primer on the fundamental concepts underlying probability.

Difficulty level: Beginner

Duration: 7:33

Speaker: Barton Poulson

Serving as a good refresher, this lesson explains the math and logic concepts that are important for programmers to understand, including sets, propositional logic, conditional statements, and more.

This compilation is courtesy of freeCodeCamp.

Difficulty level: Beginner

Duration: 1:00:07

Speaker: Shawn Grooms

This lesson provides a useful refresher that will facilitate the use of MATLAB, Octave, and various matrix-manipulation and machine-learning software.

This lesson was created by RootMath.

Difficulty level: Beginner

Duration: 1:21:30

This lecture covers the description and characterization of an input-output relationship in an information-theoretic context.

Difficulty level: Beginner

Duration: 1:35:33

Speaker: Jonathan D. Victor

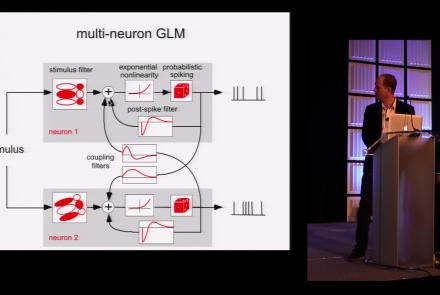

This lesson is part 1 of 2 of a tutorial on statistical models for neural data.

Difficulty level: Beginner

Duration: 1:45:48

Speaker: Jonathan Pillow

This lesson is part 2 of 2 of a tutorial on statistical models for neural data.

Difficulty level: Beginner

Duration: 1:50:31

Speaker: Jonathan Pillow

From the retina to the superior colliculus, and from the lateral geniculate nucleus into primary visual cortex and beyond, this lecture gives a tour of the mammalian visual system, highlighting the Nobel Prize-winning discoveries of Hubel & Wiesel.

Difficulty level: Beginner

Duration: 56:31

Speaker: Clay Reid

From Universal Turing Machines to McCulloch-Pitts and Hopfield associative memory networks, this lecture explains what is meant by computation.

Difficulty level: Beginner

Duration: 55:27

Speaker: Christof Koch
