Research Resource Identifiers (RRIDs) are ID numbers assigned to help researchers cite key resources (e.g., antibodies, model organisms, and software projects) in biomedical literature to improve the transparency of research methods.

Difficulty level: Beginner

Duration: 1:01:36

Speaker: Maryann Martone

This lecture provides an overview of successful open-access projects aimed at describing complex neuroscientific models, and makes a case for expanded use of resources in support of reproducibility and validation of models against experimental data.

Difficulty level: Beginner

Duration: 1:00:39

Speaker: Sharon Crook

This lesson provides an overview of Neurodata Without Borders (NWB), an ecosystem for neurophysiology data standardization. The lecture also introduces some NWB-enabled tools.

Difficulty level: Beginner

Duration: 29:53

Speaker: Oliver Ruebel

The Mouse Phenome Database (MPD) provides access to primary experimental trait data, genotypic variation, protocols, and analysis tools for mouse genetic studies. Data are contributed by investigators worldwide and represent a broad scope of phenotyping endpoints and disease-related traits in naïve mice and those exposed to drugs, environmental agents, or other treatments. MPD ensures rigorous curation of phenotype data and supporting documentation using relevant ontologies and controlled vocabularies. As a repository of curated and integrated data, MPD provides a means to access and reuse baseline data, and allows users to identify sensitized backgrounds for making new mouse models with genome editing technologies, analyze trait co-inheritance, benchmark assays in their own laboratories, and pursue many other research applications. MPD’s primary source of funding is NIDA. For this reason, a majority of MPD data is neuro- and behavior-related.

Difficulty level: Beginner

Duration: 55:36

Speaker: Elissa Chesler

This hands-on tutorial walks you through the DataJoint platform, highlighting features and schemas that can be used to build robust neuroscientific pipelines.

Difficulty level: Beginner

Duration: 26:06

Speaker: Milagros Marin

This lecture discusses how FAIR practices affect personalized data models, including workflows, challenges, and how to improve these practices.

Difficulty level: Beginner

Duration: 13:16

Speaker: Kelly Shen

This lecture covers how to make modeling workflows FAIR by working through a practical example, dissecting the steps within the workflow, and detailing the tools and resources used at each step.

Difficulty level: Beginner

Duration: 15:14

Speaker: Salvador Dura-Bernal

This lesson aims to define computational neuroscience in general terms, while providing specific examples of highly successful computational neuroscience projects.

Difficulty level: Beginner

Duration: 59:21

Speaker: Alla Borisyuk

An introduction to data management, manipulation, visualization, and analysis for neuroscience. Students will learn scientific programming in Python, and use this to work with example data from areas such as cognitive-behavioral research, single-cell recording, EEG, and structural and functional MRI. Basic signal processing techniques including filtering are covered. The course includes a Jupyter Notebook and video tutorials.

Difficulty level: Beginner

Duration: 1:09:16

Speaker: Aaron J. Newman

This lecture covers the history of behaviorism and the ultimate challenge to behaviorism.

Difficulty level: Beginner

Duration: 1:19:08

Speaker: Paul F.M.J. Verschure

This lecture covers various learning theories.

Difficulty level: Beginner

Duration: 1:00:42

Speaker: Paul F.M.J. Verschure

This lecture covers the emergence of cognitive science after the Second World War as an interdisciplinary field for studying the mind, with influences from anthropology, cybernetics, and artificial intelligence.

Difficulty level: Beginner

Duration: 51:07

Speaker: Paul F.M.J. Verschure

This lesson continues with the second workshop on reproducible science, focusing on additional open source tools for researchers and data scientists, such as the R programming language for data science and associated tools like RStudio and R Markdown. Additionally, users are introduced to Python, IPython notebooks, and Google Colab, and are given hands-on tutorials on how to create a Binder environment as well as various containers in Docker and Singularity.

Difficulty level: Beginner

Duration: 1:16:04

Speaker: Erin Dickie and Sejal Patel

This lesson contains both a lecture and a tutorial component. The lecture (0:00-20:03 of the YouTube video) discusses both the need for intersectional approaches in healthcare and the impact of neglecting intersectionality in patient populations. The lecture is followed by a practical tutorial in both Python and R on how to assess intersectional bias in datasets. Links to relevant code and data are found below.

Difficulty level: Beginner

Duration: 52:26

In this hands-on session, you will learn how to explore and work with DataLad datasets, containers, and structures using Jupyter notebooks.

Difficulty level: Beginner

Duration: 58:05

Speaker: Michał Szczepanik

This lesson provides a thorough description of neuroimaging development over time, both conceptually and technologically. You will learn about the fundamentals of imaging techniques such as MRI and PET, as well as how the resultant data may be used to generate novel data visualization schemas.

Difficulty level: Beginner

Duration: 1:43:57

Speaker: Jack Van Horn

This lecture covers a wide range of aspects regarding neuroinformatics and data governance, describing both their historical developments and current trajectories. Particular tools, platforms, and standards to make your research more FAIR are also discussed.

Difficulty level: Beginner

Duration: 54:58

Speaker: Franco Pestilli

Course:

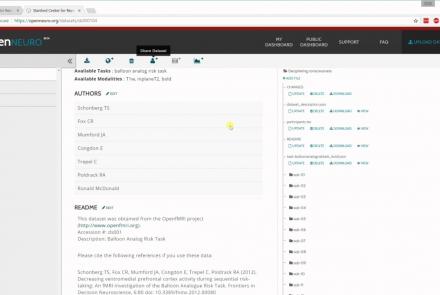

In this tutorial, you will learn the basic features of uploading and versioning your data within OpenNeuro.org.

Difficulty level: Beginner

Duration: 5:36

Speaker: OpenNeuro

This tutorial shows how to share your data in OpenNeuro.org.

Difficulty level: Beginner

Duration: 1:22

Speaker: OpenNeuro

Following the previous two tutorials on uploading and sharing data with OpenNeuro.org, this tutorial briefly covers how to run various analyses on your datasets.

Difficulty level: Beginner

Duration: 2:26

Speaker: OpenNeuro

Topics

- Artificial Intelligence (5)

- Philosophy of Science (5)

- Notebooks (2)

- Provenance (2)

- protein-protein interactions (1)

- Extracellular signaling (1)

- Animal models (2)

- Assembly 2021 (28)

- Brain-hardware interfaces (13)

- Clinical neuroscience (3)

- International Brain Initiative (2)

- Repositories and science gateways (6)

- Resources (6)

- General neuroscience (10)

- Phenome (1)

- General neuroinformatics (4)

- Computational neuroscience (90)

- Statistics (2)

- Computer Science (8)

- Genomics (23)

- Data science (18)

- Open science (24)

- Project management (6)

- Education (2)

- Neuroethics (25)