This lesson provides instructions on how to build and share extensions in NWB.

Difficulty level: Advanced

Duration: 20:29

Speaker: Ryan Ly

Learn how to build custom APIs for NWB extensions.

Difficulty level: Advanced

Duration: 25:40

Speaker: Andrew Tritt

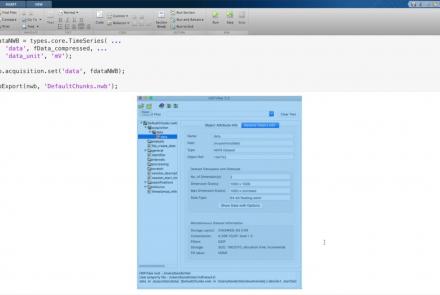

This lesson provides instruction on advanced writing strategies in HDF5 that are accessible through PyNWB.

Difficulty level: Advanced

Duration: 23:00

Speaker: Oliver Ruebel

This lesson provides a tutorial on how to handle writing very large data in MatNWB.

Difficulty level: Advanced

Duration: 16:18

Speaker: Ben Dichter

This lesson provides an introduction to biologically detailed computational modelling of neural dynamics, including neuron membrane potential simulation and F-I curves.

Difficulty level: Intermediate

Duration: 8:21

Speaker: Mike X. Cohen

In this lesson, users learn how to use MATLAB to build an adaptive exponential integrate and fire (AdEx) neuron model.

Difficulty level: Intermediate

Duration: 22:01

Speaker: Mike X. Cohen

In this lesson, users learn about the practical differences between MATLAB scripts and functions, as well as how to embed their neuronal simulation into a callable function.

Difficulty level: Intermediate

Duration: 11:20

Speaker: Mike X. Cohen

This lesson teaches users how to generate a frequency-current (F-I) curve, which describes the function that relates the net synaptic current (I) flowing into a neuron to its firing rate (F).

Difficulty level: Intermediate

Duration: 20:39

Speaker: Mike X. Cohen
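
As a rough illustration of what an F-I curve is, the sketch below sweeps a constant input current through a simple leaky integrate-and-fire neuron and records the resulting firing rate. It is written in Python rather than the MATLAB mentioned in the neighbouring lessons, the neuron is a simpler stand-in for the AdEx model, and every name and parameter value (`lif_firing_rate`, `tau_m`, `r_m`, the voltage thresholds) is an assumption chosen for illustration, not taken from the lesson.

```python
def lif_firing_rate(current_na, duration_s=1.0, dt=1e-4):
    """Simulate a leaky integrate-and-fire neuron; return its firing rate in Hz.

    All parameter values below are illustrative assumptions.
    """
    tau_m = 0.02       # membrane time constant (s)
    r_m = 10.0         # membrane resistance (MOhm); MOhm * nA gives mV
    v_rest = -70.0     # resting potential (mV)
    v_thresh = -50.0   # spike threshold (mV)
    v_reset = -65.0    # reset potential after a spike (mV)

    v = v_rest
    spikes = 0
    for _ in range(int(round(duration_s / dt))):
        # Forward-Euler step of tau_m * dV/dt = -(V - v_rest) + r_m * I
        v += dt * (-(v - v_rest) + r_m * current_na) / tau_m
        if v >= v_thresh:
            spikes += 1
            v = v_reset
    return spikes / duration_s


# Sweep the input current (nA) and pair each value with its firing rate (Hz).
currents = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
fi_curve = [(i, lif_firing_rate(i)) for i in currents]

for current, rate in fi_curve:
    print(f"I = {current:.1f} nA -> F = {rate:.0f} Hz")
```

With these assumed parameters the neuron stays silent until the input exceeds roughly 2 nA, the point where `r_m * I` matches the 20 mV gap between rest and threshold, and the rate rises with current beyond that.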

This talk gives an overview of the Human Brain Project, a 10-year endeavour putting in place a cutting-edge research infrastructure that will allow scientific and industrial researchers to advance our knowledge in the fields of neuroscience, computing, and brain-related medicine.

Difficulty level: Intermediate

Duration: 24:52

Speaker: Katrin Amunts

This lecture gives an introduction to the European Academy of Neurology, its recent achievements and ambitions.

Difficulty level: Intermediate

Duration: 21:57

Speaker: Paul Boon

This lesson breaks down the principles of Bayesian inference and how they relate to cognitive processes and functions such as learning and perception. It then explains how cognitive models can be built using Bayesian statistics to investigate how our brains interface with their environment.

This lesson corresponds to slides 1-64 in the PDF below.

Difficulty level: Intermediate

Duration: 1:28:14

Speaker: Andreea Diaconescu

This is a tutorial on designing a Bayesian inference model to map belief trajectories, with emphasis on gaining familiarity with Hierarchical Gaussian Filters (HGFs).

This lesson corresponds to slides 65-90 of the PDF below.

Difficulty level: Intermediate

Duration: 1:15:04

Speaker: Daniel Hauke

This lesson delves into the structure of one of the brain's most elemental computational units, the neuron, and how that structure influences computational neural network models.

Difficulty level: Intermediate

Duration: 6:33

Speaker: Marcus Ghosh

In this lesson you will learn how machine learners and neuroscientists construct abstract computational models based on various neurophysiological signalling properties.

Difficulty level: Intermediate

Duration: 10:52

Speaker: Dan Goodman

While the previous lesson in the Neuro4ML course dealt with the mechanisms involved in individual synapses, this lesson discusses how synapses and their neurons' firing patterns may change over time.

Difficulty level: Intermediate

Duration: 4:48

Speaker: Marcus Ghosh

This lesson introduces some practical exercises which accompany the Synapses and Networks portion of this Neuroscience for Machine Learners course.

Difficulty level: Intermediate

Duration: 3:51

Speaker: Dan Goodman

This lesson introduces the practical exercises which accompany the previous lessons on animal and human connectomes in the brain and nervous system.

Difficulty level: Intermediate

Duration: 4:10

Speaker: Dan Goodman

This lesson describes spike timing-dependent plasticity (STDP), a biological process that adjusts the strength of connections between neurons in the brain, and how one can implement or mimic this process in a computational model. You will also find links for practical exercises at the bottom of this page.

Difficulty level: Intermediate

Duration: 12:50

Speaker: Dan Goodman
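
For readers who want a concrete picture of STDP before watching, one common textbook form is the pair-based exponential rule: a synapse is strengthened when the presynaptic spike precedes the postsynaptic spike and weakened when the order is reversed, with the effect decaying as the spikes move further apart in time. The Python sketch below assumes that rule. The function name `stdp_weight_change` and all amplitudes and time constants are hypothetical values for illustration, and the lesson may use a different formulation.

```python
import math


def stdp_weight_change(dt_ms, a_plus=0.01, a_minus=0.012,
                       tau_plus=20.0, tau_minus=20.0):
    """Weight change for one pre/post spike pair under a pair-based STDP rule.

    dt_ms is t_post - t_pre in milliseconds. All default parameter
    values are illustrative assumptions.
    """
    if dt_ms > 0:
        # Pre fires before post: potentiation, decaying with the time gap.
        return a_plus * math.exp(-dt_ms / tau_plus)
    if dt_ms < 0:
        # Post fires before pre: depression, decaying with the time gap.
        return -a_minus * math.exp(dt_ms / tau_minus)
    return 0.0


# Apply the rule to a few spike-time differences (ms).
weight = 0.5
for dt_ms in (5.0, 30.0, -5.0, -30.0):
    dw = stdp_weight_change(dt_ms)
    weight += dw
    print(f"dt = {dt_ms:+.0f} ms -> dw = {dw:+.5f}, weight = {weight:.5f}")
```

In a full simulation this update is usually applied online with per-synapse traces rather than by enumerating spike pairs; the pairwise form is shown here only because it is the easiest to read.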

In this lesson, you will learn about some of the many methods for training spiking neural networks (SNNs) that either make no attempt to use gradients or use them only in a limited or constrained way.

Difficulty level: Intermediate

Duration: 5:14

Speaker: Dan Goodman

In this lesson, you will learn how to train spiking neural networks (SNNs) with a surrogate gradient method.

Difficulty level: Intermediate

Duration: 11:23

Speaker: Dan Goodman
