This is the first of two workshops on reproducibility in science, during which participants are introduced to the concepts of FAIR and open science. After discussing the definition of and need for FAIR science, participants are walked through tutorials on installing and using GitHub and Docker, widely used tools for versioning and publishing code and software, respectively.

Difficulty level: Intermediate

Duration: 1:20:58

Speaker: Erin Dickie and Sejal Patel

This lesson contains both a lecture and a tutorial component. The lecture (0:00-20:03 of YouTube video) discusses both the need for intersectional approaches in healthcare as well as the impact of neglecting intersectionality in patient populations. The lecture is followed by a practical tutorial in both Python and R on how to assess intersectional bias in datasets. Links to relevant code and data are found below.

Difficulty level: Beginner

Duration: 52:26

This is a hands-on tutorial on PLINK, the open-source whole-genome association analysis toolset. The aims of this tutorial are to teach users how to perform basic quality control on genetic datasets, as well as to identify and understand GWAS summary statistics.

Difficulty level: Intermediate

Duration: 1:27:18

Speaker: Dan Felsky

This is a tutorial on using the open-source software PRSice to calculate a set of polygenic risk scores (PRS) for a study sample. Users will also learn how to read PRS into R, visualize distributions, and perform basic association analyses.

Difficulty level: Intermediate

Duration: 1:53:34

Speaker: Dan Felsky

This tutorial introduces pipelines and methods to compute brain connectomes from fMRI data. With corresponding code and repositories, participants can follow along and learn how to programmatically preprocess, curate, and analyze functional and structural brain data to produce connectivity matrices.

Difficulty level: Intermediate

Duration: 1:39:04

Speaker: Erin Dickie and John Griffiths

This is a tutorial on designing a Bayesian inference model to map belief trajectories, with emphasis on gaining familiarity with Hierarchical Gaussian Filters (HGFs).

This lesson corresponds to slides 65-90 of the PDF below.

Difficulty level: Intermediate

Duration: 1:15:04

Speaker: Daniel Hauke

This lesson introduces the practical exercises which accompany the previous lessons on animal and human connectomes in the brain and nervous system.

Difficulty level: Intermediate

Duration: 4:10

Speaker: Dan Goodman

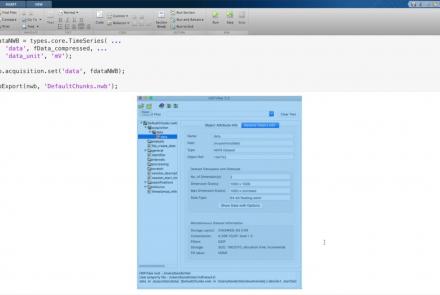

This lesson provides a tutorial on how to handle writing very large data in MatNWB.

Difficulty level: Advanced

Duration: 16:18

Speaker: Ben Dichter

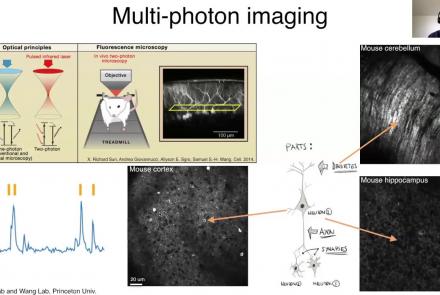

This lesson provides an overview of the CaImAn package, as well as a demonstration of usage with NWB.

Difficulty level: Intermediate

Duration: 44:37

Speaker: Andrea Giovannucci

This lesson gives an overview of the SpikeInterface package, including demonstration of data loading, preprocessing, spike sorting, and comparison of spike sorters.

Difficulty level: Intermediate

Duration: 1:10:28

Speaker: Alessio Buccino

In this lesson, users will learn about the NWBWidgets package, including coverage of different data types, and information for building custom widgets within this framework.

Difficulty level: Intermediate

Duration: 47:15

Speaker: Ben Dichter

This lesson provides a comprehensive introduction to the command line and 50 popular Linux commands. This is a long introduction (about 5 hours), but well worth it if you are going to spend a good part of your career working from a terminal, which is likely if you are interested in flexibility, power, and reproducibility in neuroscience research. This lesson is courtesy of freeCodeCamp.

Difficulty level: Beginner

Duration: 5:00:16

Speaker: Colt Steele

This lesson provides a hands-on tutorial for generating simulated brain data within the EBRAINS ecosystem.

Difficulty level: Beginner

Duration: 32:58

Speaker: Jil Meier

This lecture describes in detail how to process workflows in the virtual research environment (VRE), including approaches for standardization, metadata, containerization, and constructing and maintaining scientific pipelines.

Difficulty level: Intermediate

Duration: 1:03:55

Speaker: Patrik Bey

This lesson provides an overview of how to conceptualize, design, implement, and maintain neuroscientific pipelines via the cloud-based computational reproducibility platform Code Ocean.

Difficulty level: Beginner

Duration: 17:01

Speaker: David Feng

In this workshop talk, you will receive a tour of the Code Ocean ScienceOps Platform, a centralized cloud workspace for all teams.

Difficulty level: Beginner

Duration: 10:24

Speaker: Frank Zappulla

This lesson provides an overview of how to construct computational pipelines for neurophysiological data using DataJoint.

Difficulty level: Beginner

Duration: 17:37

Speaker: Dimitri Yatsenko

This talk describes approaches to maintaining integrated workflows and data management schemas, taking advantage of the many existing open-source, collaborative platforms.

Difficulty level: Beginner

Duration: 15:15

Speaker: Erik C. Johnson

This hands-on tutorial walks you through the DataJoint platform, highlighting features and schemas that can be used to build robust neuroscientific pipelines.

Difficulty level: Beginner

Duration: 26:06

Speaker: Milagros Marin

In this third and final hands-on tutorial from the Research Workflows for Collaborative Neuroscience workshop, you will learn about workflow orchestration using open source tools like DataJoint and Flyte.

Difficulty level: Intermediate

Duration: 22:36

Speaker: Daniel Xenes
