This lesson gives an in-depth introduction to ethics in the field of artificial intelligence, particularly in the context of its impact on humans and the public interest. As the healthcare sector becomes increasingly affected by the implementation of ever stronger AI algorithms, this lecture covers key interests that must be protected going forward, including privacy, consent, human autonomy, inclusiveness, and equity.

Difficulty level: Beginner

Duration: 1:22:06

Speaker: Daniel Buchman

This lesson describes a definitional framework for fairness and health equity in the age of the algorithm. While acknowledging the impressive capability of machine learning to positively affect health equity, this talk outlines potential (and actual) pitfalls which come with such powerful tools, ultimately making the case for collaborative, interdisciplinary, and transparent science as a way to operationalize fairness in health equity.

Difficulty level: Beginner

Duration: 1:06:35

Speaker: Laura Sikstrom

This lesson contains both a lecture and a tutorial component. The lecture (0:00-20:03 of YouTube video) discusses both the need for intersectional approaches in healthcare as well as the impact of neglecting intersectionality in patient populations. The lecture is followed by a practical tutorial in both Python and R on how to assess intersectional bias in datasets. Links to relevant code and data are found below.

Difficulty level: Beginner

Duration: 52:26
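
As a rough illustration of what assessing intersectional bias in a dataset can involve, the sketch below compares an outcome rate across single attributes and across their intersections. It is not the tutorial's own code: the helper `subgroup_rates`, the column names (`sex`, `age_group`, `treated`), and the toy records are all hypothetical and invented for this example.

```python
from collections import defaultdict


def subgroup_rates(records, attributes, outcome):
    """Return {subgroup tuple: (row count, share of rows with a truthy outcome)}."""
    counts = defaultdict(int)
    positives = defaultdict(int)
    for row in records:
        # A subgroup is the combination of values across all chosen attributes.
        key = tuple(row[a] for a in attributes)
        counts[key] += 1
        if row[outcome]:
            positives[key] += 1
    return {key: (counts[key], positives[key] / counts[key]) for key in counts}


# Hypothetical toy records (assumption: not taken from the lesson's data).
records = [
    {"sex": "F", "age_group": "older", "treated": 0},
    {"sex": "F", "age_group": "older", "treated": 0},
    {"sex": "F", "age_group": "younger", "treated": 1},
    {"sex": "F", "age_group": "younger", "treated": 1},
    {"sex": "M", "age_group": "older", "treated": 1},
    {"sex": "M", "age_group": "older", "treated": 1},
    {"sex": "M", "age_group": "younger", "treated": 1},
    {"sex": "M", "age_group": "younger", "treated": 0},
]

# Rates for one attribute at a time, then for the intersection of two.
by_sex = subgroup_rates(records, ["sex"], "treated")
by_both = subgroup_rates(records, ["sex", "age_group"], "treated")

for key, (n, rate) in sorted(by_both.items()):
    print(key, n, rate)
```

In this toy data the single-attribute view shows a moderate gap between the two `sex` groups, while the intersectional view shows one subgroup receiving no treatment at all. Small subgroup counts make such rates unstable, so a real assessment would also report the counts and some measure of uncertainty.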

This lesson is an overview of transcriptomics, from fundamental concepts of the central dogma and RNA sequencing at the single-cell level, to how gene expression underlies diversity in cell phenotypes.

Difficulty level: Intermediate

Duration: 1:29:08

Speaker: Shreejoy Tripathy

Course:

This lesson describes the principles underlying functional magnetic resonance imaging (fMRI), diffusion-weighted imaging (DWI), tractography, and parcellation. These tools and concepts are explained in a broader context of neural connectivity and mental health.

Difficulty level: Intermediate

Duration: 1:47:22

Speaker: Erin Dickie and John Griffiths

This lecture discusses what defines an integrative approach to research and methods, including various study designs and models that are appropriate choices when attempting to bridge data domains, a necessity in whole-person modelling.

Difficulty level: Beginner

Duration: 1:28:14

Speaker: Dan Felsky

This lesson explores how researchers try to understand neural networks, particularly in the case of observing neural activity.

Difficulty level: Intermediate

Duration: 8:20

Speaker: Marcus Ghosh

In this lesson, you will learn about one particular aspect of decision making: reaction times. In other words, how long does it take to make a decision based on a stream of information arriving continuously over time?

Difficulty level: Intermediate

Duration: 6:01

Speaker: Dan Goodman

This lesson discusses a gripping neuroscientific question: why have neurons developed the discrete action potential, or spike, as a principal method of communication?

Difficulty level: Intermediate

Duration: 9:34

Speaker: Dan Goodman

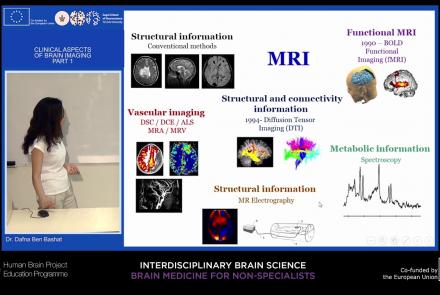

This lecture will provide an overview of neuroimaging techniques and their clinical applications.

Difficulty level: Beginner

Duration: 45:29

Speaker: Dafna Ben Bashat

This lesson discusses state-of-the-art detection and prevention schemes for neurodegenerative diseases.

Difficulty level: Beginner

Duration: 1:02:29

Speaker: Nir Giladi

This lecture gives an introduction to the types of glial cells, homeostasis (influence of cerebral blood flow and influence on neurons), insulation and protection of axons (myelin sheath; nodes of Ranvier), microglia and reactions of the CNS to injury.

Difficulty level: Beginner

Duration: 40:32

Speaker: Christine Bandtlow

Course:

This lesson gives an introductory presentation on how data science can help with scientific reproducibility.

Difficulty level: Beginner

Duration:

Speaker: Michel Dumontier

In this lesson, you will hear about the current challenges in data management, as well as policies and resources aimed at addressing them.

Difficulty level: Beginner

Duration: 18:13

Speaker: Mojib Javadi

This lecture provides an introduction to the Brain Imaging Data Structure (BIDS), a standard for organizing human neuroimaging datasets.

Difficulty level: Intermediate

Duration: 56:49

Speaker: Chris Gorgolewski

Course:

This lecture presents an overview of functional brain parcellations, as well as a set of tutorials on bootstrap aggregation of stable clusters (BASC) for fMRI brain parcellation.

Difficulty level: Advanced

Duration: 50:28

Speaker: Pierre Bellec

Course:

This lecture covers FAIR atlases, including their background and construction, as well as how they can be created in line with the FAIR principles.

Difficulty level: Beginner

Duration: 14:24

Speaker: Heidi Kleven

Course:

This lecture covers the needs and challenges involved in creating a FAIR ecosystem for neuroimaging research.

Difficulty level: Beginner

Duration: 12:26

Speaker: Camille Maumet

Course:

This lecture covers multiple aspects of FAIR neuroscience data: what makes it unique, the challenges to making it FAIR, the importance of overcoming these challenges, and how data governance comes into play.

Difficulty level: Beginner

Duration: 14:56

Speaker: Damian Eke

This lecture covers how to make modeling workflows FAIR by working through a practical example, dissecting the steps within the workflow, and detailing the tools and resources used at each step.

Difficulty level: Beginner

Duration: 15:14

Speaker: Salvador Dura-Bernal

Topics

- Philosophy of Science (5)

- Artificial Intelligence (4)

- Animal models (2)

- Assembly 2021 (26)

- Brain-hardware interfaces (2)

- Clinical neuroscience (11)

- International Brain Initiative (2)

- Repositories and science gateways (5)

- Resources (6)

- General neuroscience (13)

- Cognitive Science (7)

- Cell signaling (3)

- Brain networks (5)

- Glia (1)

- Electrophysiology (8)

- Learning and memory (4)

- Neuroanatomy (3)

- Neurobiology (11)

- Neurodegeneration (1)

- Neuroimmunology (1)

- Neural networks (11)

- Neurophysiology (4)

- Neuropharmacology (1)

- Neuronal plasticity (1)

- Synaptic plasticity (1)

- General neuroinformatics (12)

- Computational neuroscience (55)

- Statistics (3)

- Computer Science (4)

- Genomics (4)

- Data science (6)

- Open science (12)

- Project management (3)

- Education (1)

- Neuroethics (6)