This lesson discusses FAIR principles and methods currently in development for assessing FAIRness.

Difficulty level: Beginner

Duration:

Speaker: Michel Dumontier

This lesson describes the principles underlying functional magnetic resonance imaging (fMRI), diffusion-weighted imaging (DWI), tractography, and parcellation. These tools and concepts are explained in a broader context of neural connectivity and mental health.

Difficulty level: Intermediate

Duration: 1:47:22

Speaker: Erin Dickie and John Griffiths

This lecture presents an overview of functional brain parcellations, as well as a set of tutorials on bootstrap aggregation of stable clusters (BASC) for fMRI brain parcellation.

Difficulty level: Advanced

Duration: 50:28

Speaker: Pierre Bellec

This lesson explores how researchers try to understand neural networks, particularly by observing neural activity.

Difficulty level: Intermediate

Duration: 8:20

Speaker: Marcus Ghosh

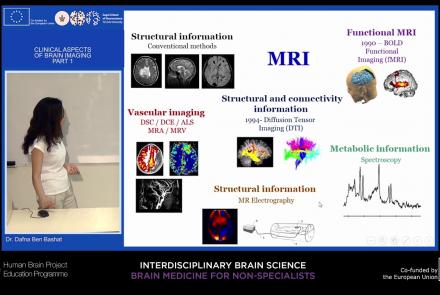

This lecture provides an overview of neuroimaging techniques and their clinical applications.

Difficulty level: Beginner

Duration: 45:29

Speaker: Dafna Ben Bashat

This lecture provides an introduction to the Brain Imaging Data Structure (BIDS), a standard for organizing human neuroimaging datasets.

Difficulty level: Intermediate

Duration: 56:49

Speaker: Chris Gorgolewski

This lecture covers the needs and challenges involved in creating a FAIR ecosystem for neuroimaging research.

Difficulty level: Beginner

Duration: 12:26

Speaker: Camille Maumet

This lecture covers how to use the NIDM data format within BIDS to make your datasets more searchable, and how to optimize your dataset searches.

Difficulty level: Beginner

Duration: 12:33

Speaker: David Keator

This lecture covers the processes, benefits, and challenges involved in designing, collecting, and sharing FAIR neuroscience datasets.

Difficulty level: Beginner

Duration: 11:35

Speaker: Julie Boyle & Valentina Borghesani

This lecture covers positron emission tomography (PET) imaging and the Brain Imaging Data Structure (BIDS), and how they work together within the PET-BIDS standard to make neuroscience more open and FAIR.

Difficulty level: Beginner

Duration: 12:06

Speaker: Melanie Ganz

This lecture covers the benefits and difficulties of re-using open datasets, and why metadata is important to the process.

Difficulty level: Beginner

Duration: 11:20

Speaker: Elizabeth DuPre

This lecture provides guidance on the ethical considerations the clinical neuroimaging community faces when applying the FAIR principles to its research.

Difficulty level: Beginner

Duration: 13:11

Speaker: Gustav Nilsonne

This lecture provides an introduction to the course "Cognitive Science & Psychology: Mind, Brain, and Behavior".

Difficulty level: Beginner

Duration: 1:06:49

Speaker: Paul F.M.J. Verschure

This lesson covers the history of neuroscience and machine learning, and the story of how these two seemingly disparate fields are increasingly merging.

Difficulty level: Beginner

Duration: 12:25

Speaker: Dan Goodman

In this lesson you will learn how machine learners and neuroscientists construct abstract computational models based on various neurophysiological signalling properties.

Difficulty level: Intermediate

Duration: 10:52

Speaker: Dan Goodman

Whereas the previous two lessons described the biophysical and signalling properties of individual neurons, this lesson describes the properties of those units when they are part of larger networks.

Difficulty level: Intermediate

Duration: 6:00

Speaker: Marcus Ghosh

This lesson goes over some examples of how machine learners and computational neuroscientists design and build neural network models inspired by biological brain systems.

Difficulty level: Intermediate

Duration: 12:52

Speaker: Dan Goodman

In this lesson, you will learn about different approaches to modeling learning in neural networks, focusing particularly on how system parameters such as firing rates and synaptic weights impact a network.

Difficulty level: Intermediate

Duration: 9:40

Speaker: Dan Goodman

In this lesson, you will learn about some of the many methods for training spiking neural networks (SNNs) that either make no attempt to use gradients or use gradients only in a limited or constrained way.

Difficulty level: Intermediate

Duration: 5:14

Speaker: Dan Goodman

In this lesson, you will learn how to train spiking neural networks (SNNs) with a surrogate gradient method.

Difficulty level: Intermediate

Duration: 11:23

Speaker: Dan Goodman

Topics

- Philosophy of Science (5)

- Artificial Intelligence (4)

- Animal models (2)

- Assembly 2021 (26)

- Brain-hardware interfaces (2)

- Clinical neuroscience (11)

- International Brain Initiative (2)

- Repositories and science gateways (5)

- Resources (6)

- General neuroscience (13)

- Cognitive Science (7)

- Cell signaling (3)

- Brain networks (5)

- Glia (1)

- Electrophysiology (8)

- Learning and memory (4)

- Neuroanatomy (3)

- Neurobiology (11)

- Neurodegeneration (1)

- Neuroimmunology (1)

- Neural networks (11)

- Neurophysiology (4)

- Neuropharmacology (1)

- Neuronal plasticity (1)

- Synaptic plasticity (1)

- General neuroinformatics (12)

- Computational neuroscience (55)

- Statistics (3)

- Computer Science (4)

- Genomics (4)

- Data science (6)

- Open science (12)

- Project management (3)

- Education (1)

- Neuroethics (6)