This lecture covers many post-war developments in the science of the mind, focusing first on the cognitive revolution and concluding with living machines.

Difficulty level: Beginner

Duration: 2:24:35

Speaker: Paul F.M.J. Verschure

This lesson delves into the structure of one of the brain's most elemental computational units, the neuron, and how that structure influences computational neural network models.

Difficulty level: Intermediate

Duration: 6:33

Speaker: Marcus Ghosh

In this lesson you will learn how machine learners and neuroscientists construct abstract computational models based on various neurophysiological signalling properties.

Difficulty level: Intermediate

Duration: 10:52

Speaker: Dan Goodman

This lesson describes spike timing-dependent plasticity (STDP), a biological process that adjusts the strength of connections between neurons in the brain, and how one can implement or mimic this process in a computational model. You will also find links for practical exercises at the bottom of this page.

Difficulty level: Intermediate

Duration: 12:50

Speaker: Dan Goodman
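As a rough illustration of the pair-based form of STDP often used in computational models, the sketch below computes a weight change from the relative timing of pre- and postsynaptic spikes using exponential windows. The amplitudes (`a_plus`, `a_minus`), time constants, weight bounds and all-to-all spike pairing are illustrative assumptions, not values taken from the lesson.

```python
import math


def stdp_update(t_pre, t_post, a_plus=0.01, a_minus=0.012,
                tau_plus=20.0, tau_minus=20.0):
    """Weight change for a single pre/post spike pair (times in ms)."""
    dt = t_post - t_pre
    if dt > 0:
        # Presynaptic spike precedes postsynaptic spike: potentiation
        return a_plus * math.exp(-dt / tau_plus)
    if dt < 0:
        # Postsynaptic spike precedes presynaptic spike: depression
        return -a_minus * math.exp(dt / tau_minus)
    return 0.0


def apply_stdp(w, pre_spikes, post_spikes, w_min=0.0, w_max=1.0, **params):
    """Sum contributions from all spike pairs, then clip to [w_min, w_max]."""
    dw = sum(stdp_update(t_pre, t_post, **params)
             for t_pre in pre_spikes for t_post in post_spikes)
    return min(w_max, max(w_min, w + dw))
```

For example, `apply_stdp(0.5, [10.0], [15.0])` returns a weight slightly above 0.5 because the presynaptic spike precedes the postsynaptic one by 5 ms.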

In this lesson, you will learn about some of the many methods for training spiking neural networks (SNNs) that either make no attempt to use gradients or use them only in a limited or constrained way.

Difficulty level: Intermediate

Duration: 5:14

Speaker: Dan Goodman

In this lesson, you will learn how to train spiking neural networks (SNNs) with a surrogate gradient method.

Difficulty level: Intermediate

Duration: 11:23

Speaker: Dan Goodman
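The core trick can be sketched on a single neuron: the forward pass uses a hard threshold, while the backward pass substitutes the derivative of a smooth function for the step function's derivative, which is zero almost everywhere. This minimal sketch assumes a one-time-step neuron with no leak, a squared-error loss, and a fast-sigmoid surrogate with steepness `beta`; it is not the specific setup used in the lesson.

```python
def spike(v, threshold=1.0):
    """Forward pass: non-differentiable Heaviside step."""
    return 1.0 if v >= threshold else 0.0


def surrogate_grad(v, threshold=1.0, beta=10.0):
    """Backward pass: fast-sigmoid derivative used in place of the step's derivative."""
    return 1.0 / (beta * abs(v - threshold) + 1.0) ** 2


def train_single_neuron(x=1.0, target=1.0, w=0.2, lr=1.0, max_steps=1000):
    """Adjust one weight until the neuron's output matches the target."""
    for step in range(max_steps):
        v = w * x                    # membrane potential (single step, no leak)
        s = spike(v)
        loss = (s - target) ** 2
        if loss == 0.0:
            return w, step
        # Chain rule, with the surrogate standing in for ds/dv
        grad_w = 2.0 * (s - target) * surrogate_grad(v) * x
        w -= lr * grad_w
    return w, max_steps
```

Without the surrogate, the gradient with respect to `w` would be zero at every step and the weight would never move; with it, the weight climbs until the neuron crosses threshold.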

In this lesson, you will hear about some of the open issues in the field of neuroscience, as well as a discussion of whether neuroscience works and how we can know.

Difficulty level: Intermediate

Duration: 6:54

Speaker: Marcus Ghosh

This lecture provides an overview of some of the essential concepts in neuropharmacology (e.g. receptor binding, agonism, antagonism), an introduction to pharmacodynamics and pharmacokinetics, and an overview of the drug discovery process for diseases of the central nervous system.

Difficulty level: Beginner

Duration: 45:47

Speaker: Sandra Santos-Sierra
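Two textbook relationships behind these concepts can be written in a few lines: equilibrium receptor occupancy for simple one-site binding, including the rightward shift produced by a competitive antagonist (the Gaddum equation), and plasma concentration after an intravenous bolus in a one-compartment pharmacokinetic model with first-order elimination. The function names and the assumption of consistent concentration units are illustrative, not taken from the lecture.

```python
import math


def occupancy(agonist, k_a, antagonist=0.0, k_b=1.0):
    """Fraction of receptors bound by an agonist at equilibrium.

    A competitive antagonist at concentration `antagonist` (dissociation
    constant `k_b`) scales the agonist's apparent K_A by (1 + [B]/K_B).
    All concentrations must be in the same units.
    """
    apparent_k_a = k_a * (1.0 + antagonist / k_b)
    return agonist / (agonist + apparent_k_a)


def plasma_concentration(dose, volume, k_el, t):
    """One-compartment model, IV bolus: C(t) = (dose / V) * exp(-k_el * t)."""
    return (dose / volume) * math.exp(-k_el * t)


def half_life(k_el):
    """Elimination half-life for first-order kinetics: ln(2) / k_el."""
    return math.log(2.0) / k_el
```

With an antagonist present at its K_B, doubling the agonist concentration restores 50% occupancy, which is the surmountable behaviour characteristic of competitive antagonism.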

This lesson gives an introduction to simple spiking neuron models.

Difficulty level: Beginner

Duration: 48 Slides

Speaker: Zubin Bhuyan
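A common starting point among simple spiking neuron models is the leaky integrate-and-fire (LIF) neuron. The sketch below integrates tau * dV/dt = -(V - V_rest) + R * I with the forward Euler method and resets the membrane potential after each threshold crossing; the parameter values (in mV, ms, MΩ and nA) are illustrative assumptions rather than values from the slides.

```python
def simulate_lif(i_input, t_max=100.0, dt=0.1, tau=10.0, v_rest=-65.0,
                 v_reset=-65.0, v_threshold=-50.0, r=10.0):
    """Return spike times (ms) of a LIF neuron driven by a constant current (nA)."""
    v = v_rest
    spike_times = []
    for k in range(int(round(t_max / dt))):
        # Forward Euler step of tau * dV/dt = -(V - V_rest) + R * I
        v += dt / tau * (-(v - v_rest) + r * i_input)
        if v >= v_threshold:
            spike_times.append((k + 1) * dt)  # time at the end of this step
            v = v_reset                        # reset after the spike
    return spike_times
```

With these values the neuron stays silent for 1 nA (its steady-state potential, -55 mV, is below threshold) and fires more often as the input current increases.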

This lesson provides an introduction to simple spiking neuron models.

Difficulty level: Beginner

Duration: 48 Slides

Speaker: Zubin Bhuyan

This lesson discusses the FAIR (Findable, Accessible, Interoperable, Reusable) principles and methods currently in development for assessing FAIRness.

Difficulty level: Beginner

Speaker: Michel Dumontier

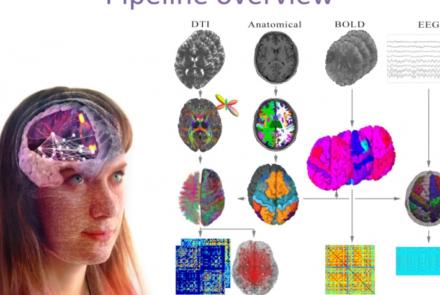

This presentation accompanies the paper titled "An automated pipeline for constructing personalized virtual brains from multimodal neuroimaging data" (see link below to download the publication).

Difficulty level: Beginner

Duration: 4:56

This lecture on model types introduces the advantages of modeling, provides examples of different model types, and explains what modeling is all about.

Difficulty level: Beginner

Duration: 27:48

Speaker: Gunnar Blohm

This lecture focuses on how to get from a scientific question to a model using concrete examples. We will present a 10-step practical guide on how to succeed in modeling. This lecture contains links to 2 tutorials, lecture/tutorial slides, suggested reading list, and 3 recorded Q&A sessions.

Difficulty level: Beginner

Duration: 29:52

Speaker: Megan Peters

This lecture formalizes modeling as a decision process that is constrained by a precise problem statement and specific model goals. We provide real-life examples of how model building is usually less linear than presented in Modeling Practice I.

Difficulty level: Beginner

Duration: 22:51

Speaker: Gunnar Blohm

This lecture focuses on the purpose of model fitting, approaches to model fitting, model fitting for linear models, and how to assess the quality of model fits and compare them. We will present a 10-step practical guide on how to succeed in modeling.

Difficulty level: Beginner

Duration: 26:46

Speaker: Jan Drugowitsch
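For a straight-line model y = a + b * x, the least-squares fit has a closed form, and under independent Gaussian noise it coincides with the maximum likelihood estimate. The sketch below is a minimal stdlib-only version, with mean squared error as a simple measure of fit quality; the function names are illustrative.

```python
def fit_line(xs, ys):
    """Least-squares intercept and slope for y = a + b * x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)                     # spread of x
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))   # co-variation of x and y
    slope = sxy / sxx
    return my - slope * mx, slope


def mse(xs, ys, intercept, slope):
    """Mean squared error of the fitted line on the given data."""
    return sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys)) / len(xs)
```

Comparing the MSE of competing models on the same data is the simplest form of model comparison, although it rewards more flexible models, which is one motivation for the cross-validation covered in Model Fitting II.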

This lecture summarizes the concepts introduced in Model Fitting I and adds two more: 1) maximum likelihood estimation (MLE) is a frequentist way of looking at the data and the model, with its own limitations; and 2) a side-by-side comparison of bootstrapping and cross-validation.

Difficulty level: Beginner

Duration: 38:17

Speaker: Kunlin Wei
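The two resampling ideas answer different questions: bootstrapping resamples the data with replacement to estimate the uncertainty of a fitted parameter, whereas k-fold cross-validation holds out each part of the data in turn to estimate how well the model predicts unseen points. The sketch below applies both to a straight-line fit; the number of bootstrap samples, the percentile interval and k = 5 are illustrative choices, not recommendations from the lecture.

```python
import random


def fit_line(xs, ys):
    """Least-squares intercept and slope for y = a + b * x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return my - slope * mx, slope


def bootstrap_slope_ci(xs, ys, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the slope."""
    rng = random.Random(seed)
    n = len(xs)
    slopes = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample with replacement
        bx = [xs[i] for i in idx]
        by = [ys[i] for i in idx]
        if len(set(bx)) < 2:
            continue  # slope undefined if every resampled x is identical
        slopes.append(fit_line(bx, by)[1])
    slopes.sort()
    lo = slopes[int(alpha / 2 * len(slopes))]
    hi = slopes[int((1 - alpha / 2) * len(slopes)) - 1]
    return lo, hi


def kfold_mse(xs, ys, k=5, seed=0):
    """Mean squared prediction error on held-out points across k folds."""
    rng = random.Random(seed)
    idx = list(range(len(xs)))
    rng.shuffle(idx)
    sq_errors = []
    for f in range(k):
        held_out = set(idx[f::k])                 # every k-th shuffled index
        train = [i for i in idx if i not in held_out]
        a, b = fit_line([xs[i] for i in train], [ys[i] for i in train])
        sq_errors.extend((ys[i] - (a + b * xs[i])) ** 2 for i in held_out)
    return sum(sq_errors) / len(sq_errors)
```

Run on the same data, the bootstrap yields an interval for the slope while cross-validation yields a single estimate of predictive error, which is why the two are complementary rather than interchangeable.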

This lecture provides an overview of the generalized linear models (GLM) course, originally part of Neuromatch Academy (NMA), an interactive online summer school held in 2020. NMA offered participants experience ranging from hands-on modeling to meta-science interpretation skills, across nearly everything that could reasonably be included under the label "computational neuroscience".

Difficulty level: Beginner

Duration: 33:58

Speaker: Cristina Savin
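In neuroscience, a common GLM is a Poisson model of spike counts with a log link, rate = exp(b0 + b1 * x), where x might be a stimulus feature. The sketch below fits such a model with a single covariate by Newton's method on the log-likelihood (for this canonical link, equivalent to iteratively reweighted least squares). The single-covariate setup and function name are illustrative assumptions; it requires at least one nonzero count and at least two distinct x values.

```python
import math


def fit_poisson_glm(xs, ys, n_iter=50, tol=1e-10):
    """Fit rate = exp(b0 + b1 * x) to counts ys by maximizing the Poisson log-likelihood."""
    b0, b1 = math.log(sum(ys) / len(ys)), 0.0  # start at the mean rate
    for _ in range(n_iter):
        lam = [math.exp(b0 + b1 * x) for x in xs]
        # Score: gradient of the log-likelihood with respect to (b0, b1)
        g0 = sum(y - l for y, l in zip(ys, lam))
        g1 = sum((y - l) * x for x, y, l in zip(xs, ys, lam))
        # Fisher information (negative Hessian) for the log link
        i00 = sum(lam)
        i01 = sum(l * x for x, l in zip(xs, lam))
        i11 = sum(l * x * x for x, l in zip(xs, lam))
        det = i00 * i11 - i01 * i01
        # Newton step: solve the 2x2 system I @ delta = g
        d0 = (i11 * g0 - i01 * g1) / det
        d1 = (i00 * g1 - i01 * g0) / det
        b0, b1 = b0 + d0, b1 + d1
        if abs(d0) + abs(d1) < tol:
            break
    return b0, b1
```

Because the Poisson log-likelihood with a log link is concave, Newton's method typically converges in a handful of iterations from the mean-rate starting point.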

This lecture further develops the concepts introduced in Machine Learning I. This lecture is part of the Neuromatch Academy (NMA), an interactive online computational neuroscience summer school held in 2020.

Difficulty level: Beginner

Duration: 29:30

Speaker: I. Memming Park

This lecture introduces the core concepts of dimensionality reduction.

Difficulty level: Beginner

Duration: 31:43

Speaker: Byron Yu
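One of the core methods in dimensionality reduction is principal component analysis (PCA), which finds the direction of greatest variance in the data. The stdlib-only sketch below estimates the first principal component by power iteration on the sample covariance matrix and reports the fraction of total variance it explains. It assumes non-constant data and a top eigenvalue strictly larger than the second; a real analysis would normally use a linear-algebra library instead.

```python
import math


def first_principal_component(points, n_iter=200):
    """Return (unit component, explained-variance ratio, projected scores)."""
    n, d = len(points), len(points[0])
    means = [sum(p[j] for p in points) / n for j in range(d)]
    centered = [[p[j] - means[j] for j in range(d)] for p in points]
    # Sample covariance matrix (d x d)
    cov = [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    # Power iteration: repeatedly apply cov and renormalize
    v = [1.0] * d
    for _ in range(n_iter):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    eigval = sum(v[i] * sum(cov[i][j] * v[j] for j in range(d)) for i in range(d))
    total_var = sum(cov[i][i] for i in range(d))
    scores = [sum(r[j] * v[j] for j in range(d)) for r in centered]  # 1-D projection
    return v, eigval / total_var, scores
```

For points lying close to the line y = 2x, the returned component is approximately (1, 2)/sqrt(5) up to sign, and the explained-variance ratio is close to 1, so a single dimension summarizes the data well.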
