This lecture presents an overview of functional brain parcellations, as well as a set of tutorials on bootstrap aggregation of stable clusters (BASC) for fMRI brain parcellation.

Difficulty level: Advanced

Duration: 50:28

Speaker: Pierre Bellec

This talk gives a brief overview of current efforts to collect and share the Brain Reference Architecture (BRA) data involved in the construction of a whole-brain architecture that assigns functions to major brain organs.

Difficulty level: Beginner

Duration: 4:02

Speaker: Yoshimasa Tawatsuj

This brief talk discusses the idea that music, as a naturalistic stimulus, offers a window into higher cognition and various levels of neural architecture.

Difficulty level: Beginner

Duration: 4:04

Speaker: Sarah Faber

In this short talk you will learn about The Neural System Laboratory, which aims to develop and implement new technologies for analysis of brain architecture, connectivity, and brain-wide gene and molecular level organization.

Difficulty level: Beginner

Duration: 8:38

Speaker: Trygve Leergaard

Neuronify is an educational tool designed to build intuition for how neurons and neural networks behave. You can use it to combine neurons with different connections, like those in the brain, and explore how changes in single cells lead to behavioral changes in important networks. Neuronify is based on the integrate-and-fire model, one of the simplest neuron models in existence. It focuses on the spike timing of a neuron and ignores the details of the action potential dynamics. Each neuron is modeled as a simple RC circuit. When the membrane potential rises above a certain threshold, a spike is generated and the voltage is reset to its resting potential. The spike then signals other neurons through the neuron's synapses.

Neuronify aims to provide a low entry point to simulation-based neuroscience.
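
The integrate-and-fire behavior described above can be illustrated with a minimal sketch. This is not Neuronify's own code: the function name `simulate_lif`, the forward-Euler update, and every parameter value (membrane resistance, capacitance, resting and threshold potentials) are assumptions chosen only for illustration. The sketch treats the membrane as an RC circuit, emits a spike when the potential reaches threshold, and resets the voltage to rest.

```python
def simulate_lif(current, dt=1e-4, duration=0.1,
                 r_m=10e6, c_m=1e-9, v_rest=-70e-3, v_thresh=-50e-3):
    """Simulate a leaky integrate-and-fire neuron with forward Euler.

    All default values are illustrative assumptions, not Neuronify defaults.
    current: constant input current in amperes.
    Returns (spike_times in seconds, membrane potential trace in volts).
    """
    tau = r_m * c_m                      # RC time constant of the membrane
    steps = int(round(duration / dt))
    v = v_rest
    spike_times, trace = [], []
    for i in range(steps):
        # RC circuit: tau * dV/dt = -(V - V_rest) + R * I
        v += (-(v - v_rest) + r_m * current) / tau * dt
        if v >= v_thresh:
            # Threshold crossed: record a spike and reset to rest
            spike_times.append((i + 1) * dt)
            v = v_rest
        trace.append(v)
    return spike_times, trace


# A strong input drives the potential past threshold repeatedly;
# a weak input settles below threshold and never fires.
strong_spikes, strong_trace = simulate_lif(3e-9)
weak_spikes, weak_trace = simulate_lif(1e-9)
print(len(strong_spikes), len(weak_spikes))
```

Because only the spike times are recorded, the shape of the action potential itself never appears in the trace, which is the simplification the model makes.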

Difficulty level: Beginner

Duration: 01:25

Speaker: Neuronify

This talk describes the NIH-funded SPARC Data Structure, and how this project navigates ontology development while keeping in mind the FAIR science principles.

Difficulty level: Beginner

Duration: 25:44

Speaker: Fahim Imam

This lecture covers structured data, databases, federating neuroscience-relevant databases, and ontologies.

Difficulty level: Beginner

Duration: 1:30:45

Speaker: Maryann Martone

This lecture covers FAIR atlases, including their background and construction, as well as how they can be created in line with the FAIR principles.

Difficulty level: Beginner

Duration: 14:24

Speaker: Heidi Kleven

This lesson provides instructions on how to build and share extensions in NWB.

Difficulty level: Advanced

Duration: 20:29

Speaker: Ryan Ly

Learn how to build custom APIs for extension.

Difficulty level: Advanced

Duration: 25:40

Speaker: Andrew Tritt

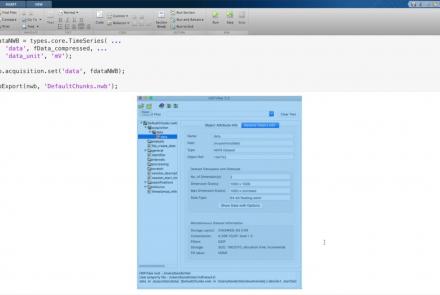

This lesson provides instruction on advanced writing strategies in HDF5 that are accessible through PyNWB.

Difficulty level: Advanced

Duration: 23:00

Speaker: Oliver Ruebel

This lesson provides a tutorial on how to handle writing very large data in MatNWB.

Difficulty level: Advanced

Duration: 16:18

Speaker: Ben Dichter

This video explains what metadata is, why it is important, and how you can organize your metadata to increase the FAIRness of your data on EBRAINS.

Difficulty level: Beginner

Duration: 17:23

Speaker: Ulrike Schlegel

This lecture reviews some standards for project management and organization, including motivation from the perspective of the FAIR principles and improved reproducibility.

Difficulty level: Beginner

Duration: 01:08:34

Speaker: Elizabeth DuPre

This lecture covers the description and characterization of an input-output relationship in an information-theoretic context.

Difficulty level: Beginner

Duration: 1:35:33

Speaker: Jonathan D. Victor

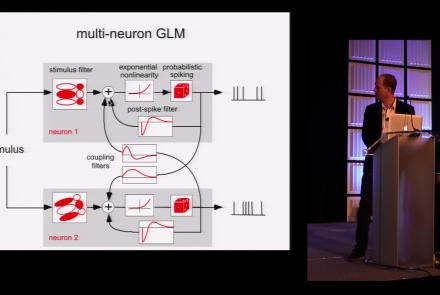

This lesson is part 1 of 2 of a tutorial on statistical models for neural data.

Difficulty level: Beginner

Duration: 1:45:48

Speaker: Jonathan Pillow

This lesson is part 2 of 2 of a tutorial on statistical models for neural data.

Difficulty level: Beginner

Duration: 1:50:31

Speaker: Jonathan Pillow

From the retina to the superior colliculus, and from the lateral geniculate nucleus into primary visual cortex and beyond, this lecture gives a tour of the mammalian visual system, highlighting the Nobel Prize-winning discoveries of Hubel & Wiesel.

Difficulty level: Beginner

Duration: 56:31

Speaker: Clay Reid

From Universal Turing Machines to McCulloch-Pitts and Hopfield associative memory networks, this lecture explains what is meant by computation.

Difficulty level: Beginner

Duration: 55:27

Speaker: Christof Koch

In this lesson you will learn about ion channels and the movement of ions across the cell membrane, one of the key mechanisms underlying neuronal communication.

Difficulty level: Beginner

Duration: 25:51

Speaker: Carl Petersen
